If you’ve worked with the Hortonworks Data Platform 2.x sandbox (or later versions) in VirtualBox and forced it to shut down rather abruptly, you might have noticed that you won’t get past this startup screen the next time you try to start it:

I had this happen a couple of times, which is why I decided to pause my sandbox and save its state every time before shutting down my laptop. But yesterday Windows 10 decided to step in. After a day of studying it was high time for me to have dinner, during which I kept the laptop on. Little did I know that Windows 10 chose that moment to update and restart. And to do this, it needed to shut down every application. Including VirtualBox. When I came back I found out to my horror that my carefully prepared HDP sandbox had been shut down in the roughest of ways. Thanks, Microsoft!

I started the HDP 2.6.1 sandbox again, but... that startup screen never went any further. I had the option to start afresh by importing the VirtualBox image anew, transferring my files again, creating my Hive databases again… No! I decided to draw a line in the sand. This HDP sandbox will live! “Don’t go into the light... er... Horty!”

Let’s ask Google

When you google “hdp sandbox hangs” or “hdp sandbox won’t restart” you almost certainly arrive at the Hortonworks Community. Some of the advice there means you have to start again on a different platform (No!), and there are some tips to diagnose the situation. For example, you can see how far CentOS got during the startup by pressing a cursor key.

Now at least you have a better way to describe how far the startup got.

Even more valuable was this advice from Hortonworks Community user Tiago Rubio: you can still access the sandbox in this situation.

Let’s access Ambari

Does this mean I can access Ambari? Yes, yes you can. Give the startup some time, then go to http://sandbox.hortonworks.com:8080 and sure enough – well I at least got to see this screen:

But the news is not all good. Almost nothing is up.
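If you would rather not keep refreshing the browser while Ambari comes up, a small polling script can do the waiting. This is only a sketch: it assumes Python 3 on the host machine and that sandbox.hortonworks.com resolves from there. The function name `wait_for_ambari` and its parameters are made up for this example.

```python
import time
import urllib.error
import urllib.request


def wait_for_ambari(url, attempts=30, delay=10.0, probe=None, sleep=time.sleep):
    """Poll `url` until it answers with HTTP 200.

    Returns True as soon as the probe succeeds and False when all
    attempts are used up. `probe` and `sleep` can be swapped out,
    which makes the loop easy to try without a running sandbox.
    """
    def http_probe(target):
        try:
            with urllib.request.urlopen(target, timeout=5) as response:
                return response.status == 200
        except (urllib.error.URLError, OSError):
            # Connection refused, timeout, DNS failure: Ambari is not up yet.
            return False

    probe = probe or http_probe
    for attempt in range(attempts):
        if probe(url):
            return True
        if attempt < attempts - 1:
            sleep(delay)
    return False


# Example call (needs the sandbox to be booting):
# wait_for_ambari("http://sandbox.hortonworks.com:8080")
```

With the defaults this gives Ambari about five minutes before giving up. Those numbers are a guess, not a measured startup time.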

Start all up

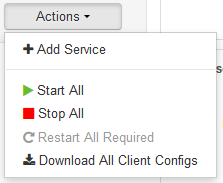

So my first intuition was to use the Actions -> Start All button.

Sure enough Ambari sets to work. Things will work out after all, you’ll see. That is until… you get the results back:

Click through a couple of times and you get a list of which components started and which ones didn’t.

Notice that the startup of the (YARN) Resource Manager was aborted, as were the Node Manager and many other tasks. These are very important.

Is there a Hadoop engineer in the house?

It seems we have to start some stuff by hand. For this you need to know in what order to start things. First we go to the Dashboard and look at HDFS.

Ah, see Hadoop runs on HDFS. And here the NameNode and the DataNode of HDFS are stopped. Let’s click on the NameNode link. We now get a list of components on the sandbox.hortonworks.com server, which is the only server in this “cluster”.

Now let’s try to start the NameNode individually.

In my case it got stuck at 35% progress for quite a while. What was happening? A couple of clicks later I found this message:

2017-11-17 10:15:24,244 - Retrying after 10 seconds. Reason: Execution of '/usr/hdp/current/hadoop-hdfs-namenode/bin/hdfs dfsadmin -fs hdfs://sandbox.hortonworks.com:8020 -safemode get | grep 'Safe mode is OFF'' returned 1.
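That log line shows what Ambari is waiting for: it keeps running `hdfs dfsadmin -safemode get` until the output contains “Safe mode is OFF”. The same wait can be sketched in a few lines of Python. The assumptions here are that Python 3 is available wherever the `hdfs` client is on the PATH (the sandbox itself ships an old Python 2.6, as the PySpark banner further down shows) and that you run it as a user who is allowed to query the NameNode. The function names are invented for this example.

```python
import subprocess
import time


def safemode_is_off(report):
    """True when the text printed by `hdfs dfsadmin -safemode get` says OFF."""
    return "Safe mode is OFF" in report


def read_safemode_report():
    """Run the same command that appears in the Ambari log line."""
    result = subprocess.run(
        ["hdfs", "dfsadmin", "-safemode", "get"],
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,
        universal_newlines=True,
    )
    return result.stdout


def wait_until_safemode_off(read_report=read_safemode_report, retries=60,
                            delay=10.0, sleep=time.sleep):
    """Retry every `delay` seconds, like Ambari does, until safe mode is off."""
    for _ in range(retries):
        if safemode_is_off(read_report()):
            return True
        sleep(delay)
    return False


# Example call on a machine with the hdfs client:
# wait_until_safemode_off()
```

If you only want to block in a terminal, `hdfs dfsadmin -safemode wait` does roughly the same thing without any script.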

It took multiple minutes, but eventually the NameNode did start without my interference. You need a bit of patience here. Now on to the next component: the DataNode. You’ll find it a bit lower in the list. This one started pretty quickly.

We could do everything by hand from here on, but actually at this point I gave that Start All button a try again. And now 100% of the services start.
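The same clicks can probably be scripted against Ambari’s REST API. I did not do this myself, so treat the following as a sketch under assumptions: the cluster name `Sandbox`, the user name and the password are placeholders you need to replace with your own, and the URL and body follow the host component form of the Ambari REST API as I understand it. `build_start_request` is a name invented for this example.

```python
import base64
import json
import urllib.request


def build_start_request(base_url, cluster, host, component, user, password):
    """Build (but do not send) a PUT that asks Ambari to start one component."""
    url = "{0}/api/v1/clusters/{1}/hosts/{2}/host_components/{3}".format(
        base_url.rstrip("/"), cluster, host, component)
    body = {
        "RequestInfo": {"context": "Start {0} via REST".format(component)},
        "Body": {"HostRoles": {"state": "STARTED"}},
    }
    credentials = "{0}:{1}".format(user, password).encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    request = urllib.request.Request(
        url, data=json.dumps(body).encode("utf-8"), method="PUT")
    request.add_header("Authorization", "Basic " + token)
    # Ambari rejects modifying calls that lack this header.
    request.add_header("X-Requested-By", "ambari")
    return request


# Example: NameNode first, then DataNode, as in the manual steps above.
# Cluster name and credentials are placeholders.
# for component in ("NAMENODE", "DATANODE"):
#     request = build_start_request(
#         "http://sandbox.hortonworks.com:8080", "Sandbox",
#         "sandbox.hortonworks.com", component, "admin", "your-password")
#     urllib.request.urlopen(request)
```

Ambari handles such a request in the background, so you would still have to wait for the NameNode to finish before the DataNode start makes sense.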

Going back to the Dashboard in Ambari I see only green marks:

Testing it

But does it really work? I want proof that what I was working on still works.

Here is Hive:

[root@sandbox ~]# su - spark

[spark@sandbox ~]$ export SPARK_MAJOR_VERSION=2

[spark@sandbox ~]$ export HIVE_HOME=/usr/hdp/2.6.1.0-129/hive

[spark@sandbox ~]$ hive

log4j:WARN No such property [maxFileSize] in org.apache.log4j.DailyRollingFileAppender.

Logging initialized using configuration in file:/etc/hive/2.6.1.0-129/0/hive-log4j.properties

hive> show databases;

OK

asteroids

default

foodmart

hdpcd_retail_db_orc

hdpcd_retail_db_txt

xademo

Time taken: 2.137 seconds, Fetched: 6 row(s)

hive> use asteroids;

OK

Time taken: 0.291 seconds

hive> select count(*) from asteroids;

OK

745470

Time taken: 0.662 seconds, Fetched: 1 row(s)

That is promising. But let’s see Spark next:

[spark@sandbox ~]$ pyspark --master yarn-client --conf spark.ui.port=12562 --executor-memory 1G --num-executors 1

SPARK_MAJOR_VERSION is set to 2, using Spark2

Python 2.6.6 (r266:84292, Aug 18 2016, 15:13:37)

[GCC 4.4.7 20120313 (Red Hat 4.4.7-17)] on linux2

Type "help", "copyright", "credits" or "license" for more information.

Warning: Master yarn-client is deprecated since 2.0. Please use master "yarn" with specified deploy mode instead.

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

/usr/hdp/current/spark2-client/python/pyspark/context.py:207: UserWarning: Support for Python 2.6 is deprecated as of Spark 2.0.0

warnings.warn("Support for Python 2.6 is deprecated as of Spark 2.0.0")

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/__ / .__/\_,_/_/ /_/\_\ version 2.1.1.2.6.1.0-129

/_/

Using Python version 2.6.6 (r266:84292, Aug 18 2016 15:13:37)

SparkSession available as 'spark'.

>>> orders = sc.textFile("/user/dmaster/retail_db/orders")

>>> orders.count()

68883

So that all works great. I can do my experimenting again. Phew!

Are we really done?

One more thing. The Ambari Dashboard looks apple green, but when you look at the list of components on the sandbox.hortonworks.com server, not everything there is green.

I hadn’t checked what status these components had before I brought my HDP sandbox back from the dead. I know HDFS’ SNameNode won’t work on only one server, but some of the others might be necessary for what you are working on. I didn’t go as far as to test this, but you could try starting these components individually and, if they don’t start, take a good look at the errors they produce.
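To get that list without clicking through the host page, you could ask Ambari’s REST API for the state of every component on the host and filter out the ones that are running. Again a sketch with assumptions: the URL in the comment uses the cluster name `Sandbox` as a placeholder, and the JSON layout (`items`, `HostRoles`, `component_name`, `state`) is how I understand Ambari’s responses to look. `components_not_started` is an invented name.

```python
import json


def components_not_started(payload):
    """Return sorted (component_name, state) pairs for everything not STARTED.

    `payload` is the JSON text returned by a call such as
    GET /api/v1/clusters/Sandbox/hosts/sandbox.hortonworks.com/host_components?fields=HostRoles/state
    (cluster name is a placeholder).
    """
    document = json.loads(payload)
    pending = []
    for item in document.get("items", []):
        roles = item.get("HostRoles", {})
        if roles.get("state") != "STARTED":
            pending.append((roles.get("component_name"), roles.get("state")))
    return sorted(pending)


# Illustrative input, not copied from a real sandbox:
# components_not_started(
#     '{"items": [{"HostRoles": {"component_name": "DATANODE", "state": "STARTED"}}]}')
```

Client components (the various `*_CLIENT` entries) are never “started”, so expect them to show up in this list even on a healthy sandbox.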

Horty lives

So in the end my HDP sandbox is alive again, but the VirtualBox console will stay stuck on its startup screen. Consider this a bypass operation. Hopefully this helps you to save your work too.

2 Comments

Radovan · November 19, 2017 at 7:08 pm

Hi Marcel-Jan,

thanks for the useful article about this issue with HDP. I had the same type of issue on VMware, and I tried your approach. All is fine and it works, but I think it would be good to refer to this page as well: https://docs.hortonworks.com/HDPDocuments/HDP2/HDP-2.6.2/bk_reference/content/starting_hdp_services.html

When I used these commands in the order they suggest, everything was up and running really fast. Of course, I only ran the commands I needed. So maybe this is the same approach for command line fans 🙂

Marcel-Jan Krijgsman · November 19, 2017 at 8:47 pm

Good tip. I could put these commands in a script to get everything running at the next restart. The main message is: you really don’t have to throw away your sandbox and start again.