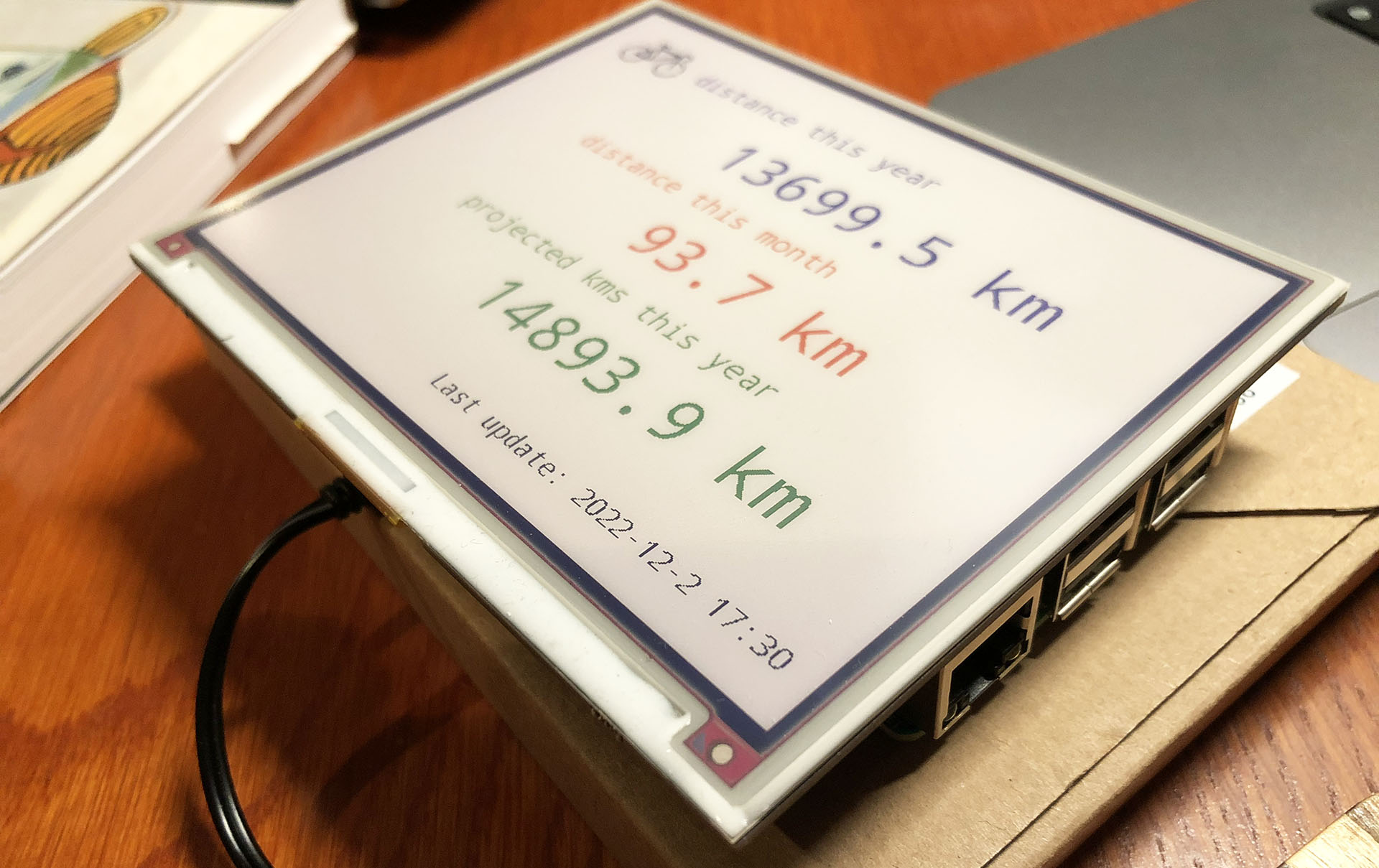

A Strava dashboard on a Raspberry Pi (Part 1): Setting up the Raspberry Pi

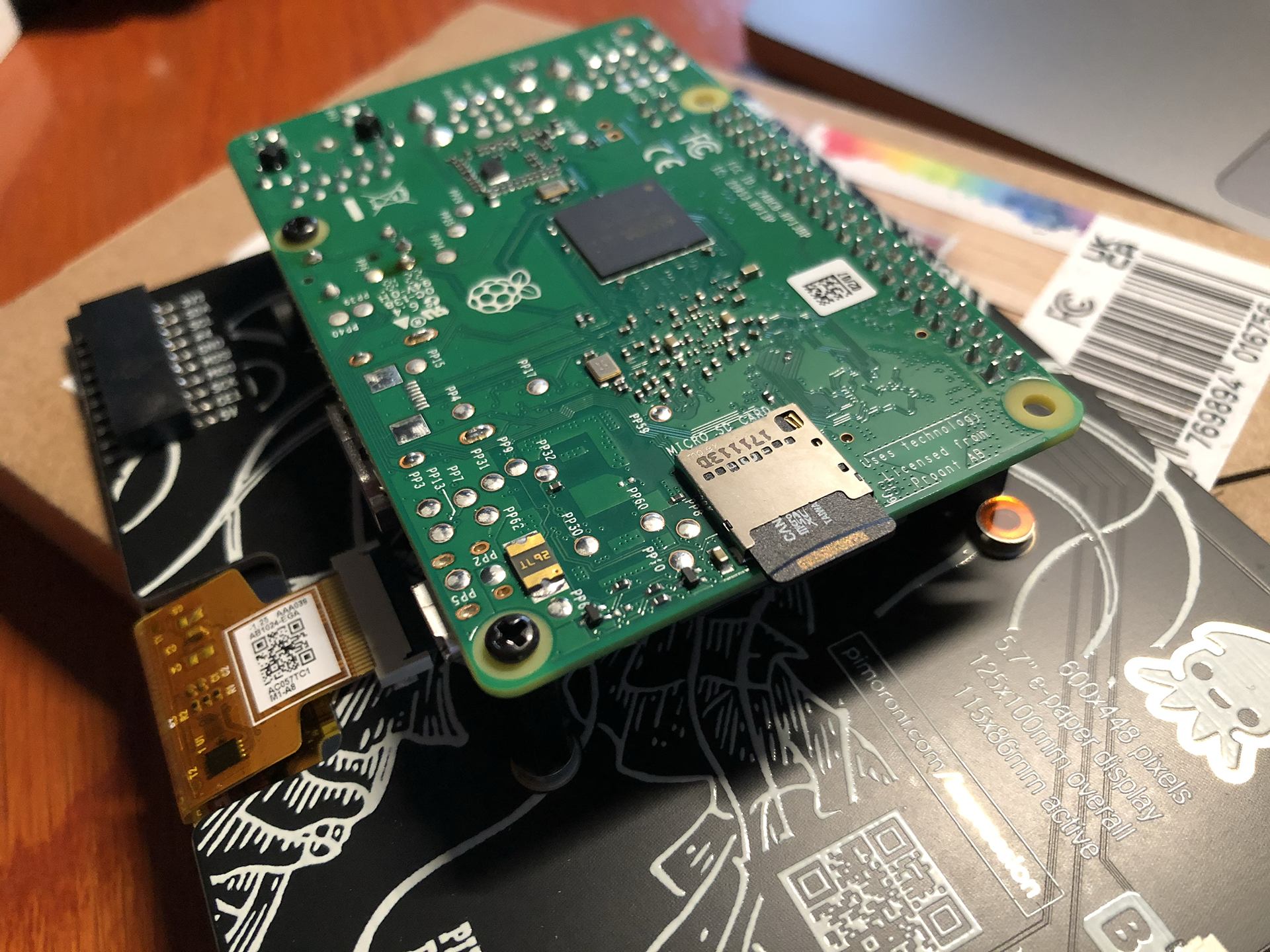

This is the list of hardware I’ve used:

- An Inky Impression 5.7-inch e-ink display. It comes with a 40-pin female header (to boost height on full-size Pis) and standoffs to attach it securely to your Pi.

- A Raspberry Pi 3 Model B+ (which I had lying around) and a power supply.

- A micro SD card with 8 GB storage or more.

- Initially: a keyboard, mouse and monitor. Once you have configured WiFi on the Raspberry Pi and enabled remote SSH access, you can connect to it over WiFi from the convenience of your regular computer.
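
Once SSH is enabled, it can be handy to check from your regular computer whether the Pi is actually reachable before you unplug the monitor. Below is a minimal sketch in Python that does this by trying to open a TCP connection to the SSH port. The function name `ssh_reachable` is my own, and port 22 is only the usual SSH default, so adjust it if your setup differs.

```python
import socket


def ssh_reachable(host: str, port: int = 22, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    This only checks that something is listening on the port; it does not
    log in or verify that the listener is really an SSH server.
    """
    try:
        # create_connection resolves the host name and opens the socket.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and failed name lookups.
        return False
```

For example, `ssh_reachable("raspberrypi.local")` would test a Pi under that hostname. The hostname here is an assumption; use whatever hostname or IP address your Pi has on your network.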

For those who don’t know the Raspberry Pi: it is a very small and fairly cheap computer. The Raspberry Pi 3B+ I’ve used, for example, costs about 40 euros. But you can spend even less, because my Strava dashboard doesn’t require much computing power.

So you could instead use a Raspberry Pi Zero 2 W (15-25 euros), which also takes up less space. But I believe this requires soldering to attach the GPIO header, and it seems to be out of stock on a lot of sites.
